The outbox pattern for messaging

The problem

Service A needs to update its own database and publish a message to service B.

The system needs to stay as consistent as possible. By consistent, we mean that if service A has updated its database, service B should receive a message. If service A did not update its database, then service B should not receive a message.

Sounds straightforward. We can have an implementation like this:

    const doWork = async () => {
      await updateDB();
      await publishMessage();
    };

Let’s evaluate this implementation.

- `updateDB` and `publishMessage` are two separate operations.

- `updateDB` and `publishMessage` can fail independently.

- If `updateDB` fails, service A’s database is not updated and service B does not get a message. Our system stays consistent.

- If `updateDB` succeeds and `publishMessage` fails, service A’s database is updated and service B does not get a message. Uh oh, our system is no longer consistent.

What if we swapped the order?

    const doWork = async () => {
      await publishMessage();
      await updateDB();
    };

- If `publishMessage` fails, service B does not get a message and service A’s database is not updated. Our system stays consistent.

- If `publishMessage` succeeds and `updateDB` fails, service B gets a message and service A’s database is not updated. Uh oh, our system is no longer consistent.

This problem is known as the dual-write problem.

The insight

- We need to make `updateDB` and `publishMessage` become one operation. That way, the system stays consistent.

- The primary operation must be `updateDB`, because service B is merely reacting to something that happened in service A.

- In practice, it matters little to the user if service B reacts to service A instantly or 10 seconds later. The important thing is that service B eventually reacts to service A. As the saying goes, “better late than never”.

- We can break down `publishMessage` into the intent and the execution. As we perform `updateDB`, we can store the intention to `publishMessage` as a row in some database table.

- We can add another component to execute the intent later on.
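To make the "store the intent alongside the update" idea concrete, here is a minimal sketch. It uses a hypothetical in-memory `createDb` helper in place of a real database, and the `orders` table, the `order.created` message type, and the row shape are all assumptions made for illustration. The point is only that the business row and the outbox row are written in the same transaction, so they either both exist or neither does.

```javascript
// Deep copy helper for the in-memory stand-in below.
const clone = (value) => JSON.parse(JSON.stringify(value));

// Hypothetical in-memory stand-in for a relational database (illustration only).
// A real implementation would rely on the database's own transactions.
const createDb = () => {
  let state = { orders: [], outbox: [] };
  return {
    // Run fn against a working copy; keep the copy only if fn does not throw.
    transaction: async (fn) => {
      const draft = clone(state);
      await fn(draft);
      state = draft; // commit: both tables change together
    },
    read: () => clone(state),
  };
};

// Update service A's data and record the intent to publish in one transaction.
const doWork = async (db, order) => {
  await db.transaction(async (tx) => {
    tx.orders.push(order); // the primary operation (updateDB)
    tx.outbox.push({
      id: tx.outbox.length + 1,
      payload: { type: "order.created", orderId: order.id }, // assumed message shape
      processedAt: null, // not yet forwarded to service B
    });
  });
};
```

If anything inside the transaction throws, the working copy is discarded, so there is never an order without its outbox row or an outbox row without its order.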

A solution

This solution is known as the transactional outbox.

- We store our intention to `publishMessage` in an `outbox` table.

- We add a worker to poll the `outbox` table for any unprocessed rows. This worker is called a relay.

- The relay processes a row by forwarding the message to its intended destination.

- The relay marks the row as completed after forwarding the message.
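The relay steps above can be sketched roughly as follows. This is not a production relay: the outbox is a plain array standing in for the table, `send` is a caller-supplied function (an assumption, it could be an HTTP call or a queue client), and the `processedAt` column name is made up for the example.

```javascript
// One polling pass over the outbox (an array standing in for the table).
// `send` is a caller-supplied async function that forwards a payload.
// Returns the number of rows forwarded in this pass.
const relayOnce = async (outbox, send) => {
  const pending = outbox.filter((row) => row.processedAt === null);
  let forwarded = 0;
  for (const row of pending) {
    try {
      await send(row.payload);
      // Mark the row completed only after the message was forwarded.
      row.processedAt = new Date().toISOString();
      forwarded += 1;
    } catch (err) {
      // Leave the row unprocessed so the next pass retries it.
    }
  }
  return forwarded;
};

// Poll on a fixed interval; returns a function that stops the relay.
const startRelay = (outbox, send, intervalMs = 1000) => {
  let running = false;
  const timer = setInterval(async () => {
    if (running) return; // skip this tick if the previous pass is still in flight
    running = true;
    try {
      await relayOnce(outbox, send);
    } finally {
      running = false;
    }
  }, intervalMs);
  return () => clearInterval(timer);
};
```

Because a row is marked only after `send` resolves, a crash between forwarding and marking makes the relay forward the same message again on the next pass. In other words, this sketch gives at-least-once delivery, not exactly-once.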

A real life case study

The context

At $startup, we had two services that needed to communicate with each other for two reasons:

- Service A needed to dispatch work to service B.

- Service B needed to report back on the result of work dispatched by service A.

The correctness of the system depended on messaging reliability. If service B failed to report back to service A with the work status, it would have appeared to our users as if the system was “stuck”.

We had several constraints which limited our options.

- There was heavy pressure from the business to ship the entire platform fast, in the span of weeks.

- All engineers knew how to build APIs, but few had worked with message queues.

- We could not provision a message queue because we were in the middle of migrating between cloud providers. Therefore, we needed a solution that worked locally so that engineers could test their work.

The solution

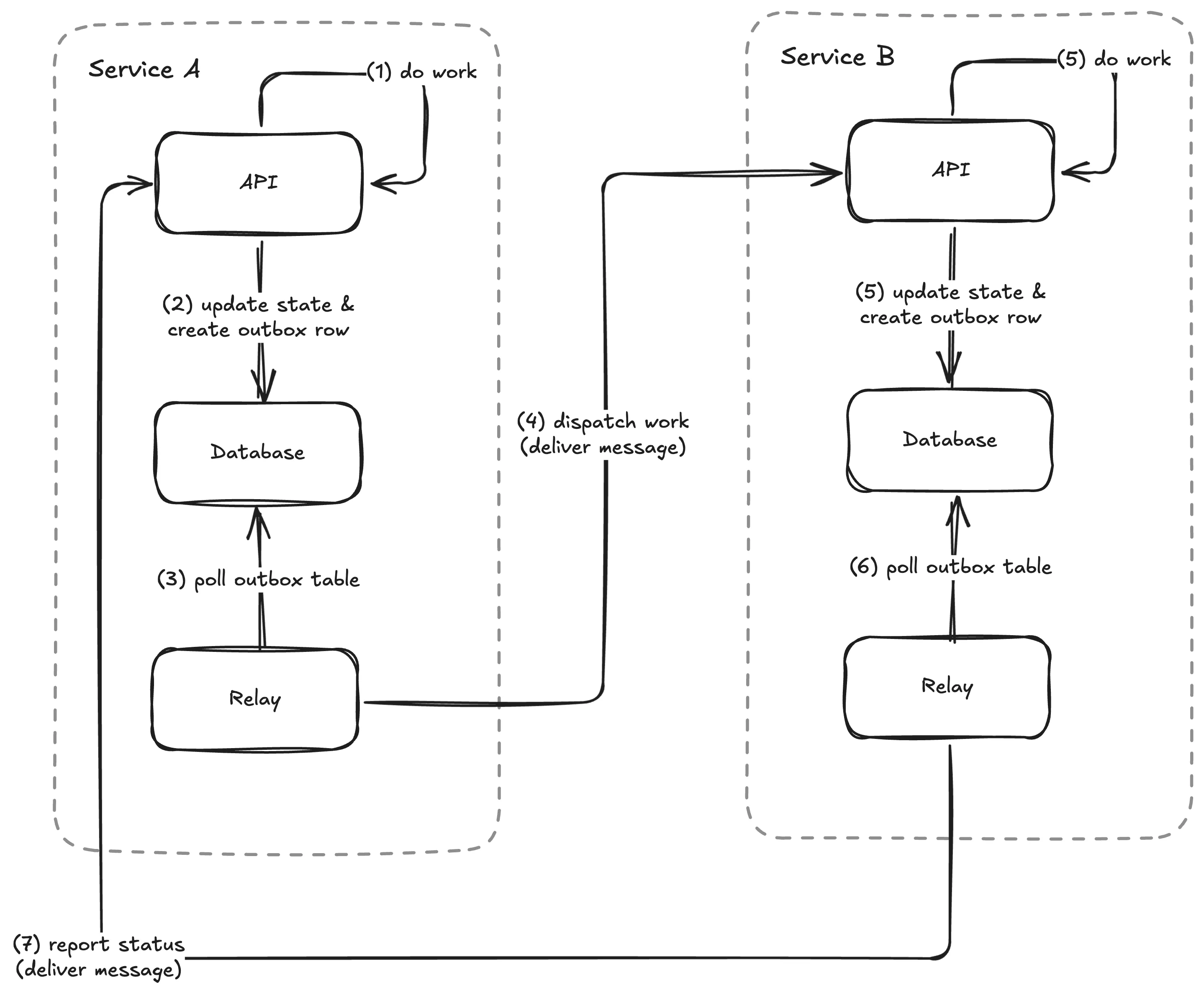

With all these constraints, we opted for a push-based HTTP solution.

- Service A and B would implement relay workers on their own.

- Service A would dispatch work to service B via an API.

- Service B would report back with the result via an API.

- The relays would attempt to deliver a message up to N times. When a message exceeded the number of attempts, the message would be forwarded to a dead-letter queue for later inspection.
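As a rough illustration of the retry and dead-letter behaviour described above (not our actual relay code), a pass with bounded attempts might look like this. The `status` and `attempts` fields, the `deadLetters` array, and the default of three attempts are assumptions for the sketch. `send` stands in for the HTTP call to the other service.

```javascript
// One relay pass with bounded retries.
// `outbox` and `deadLetters` are arrays standing in for database tables.
// Each outbox row is assumed to look like:
//   { id, payload, status: "pending" | "completed" | "dead", attempts: 0 }
const relayWithRetries = async (outbox, deadLetters, send, maxAttempts = 3) => {
  for (const row of outbox) {
    if (row.status !== "pending") continue;
    try {
      await send(row.payload);
      row.status = "completed";
    } catch (err) {
      row.attempts += 1;
      if (row.attempts >= maxAttempts) {
        // Give up and keep a copy for later inspection.
        row.status = "dead";
        deadLetters.push({ ...row, lastError: String(err) });
      }
    }
  }
};
```

Since `send` is passed in, the same loop works whether it wraps an HTTP request or a message queue client.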

What went well?

- Engineers implemented and tested their work locally with Docker Compose.

- We did not have to deploy new dependencies. The push-based HTTP solution used existing databases.

- The dead-letter queue allowed engineers to verify if messages were successfully sent.

- Both services eventually migrated from push-based HTTP to dedicated message queues with minimal changes. Engineers only needed to update the relays to push to the message queue.

What challenges did we encounter?

- We had to ensure that recipients were idempotent and could discard stale messages. The relay determines the delivery and ordering guarantees. Our relay implementation provided at-least-once delivery with no strict ordering, because those were the simplest guarantees we could build.

- Each service had its own relay implementation with different reliability and ordering guarantees. A generic relay that could run as a sidecar would have simplified adoption of the outbox pattern.

- Early on, I advocated for separate outbox tables for each message type. Separate outbox tables led to a lot of duplicate work, as engineers had to re-implement the relay for each outbox table. It also put extra load on the database, as each relay polled its own outbox table. Given the choice, I would advocate for a central outbox table instead.
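On the first challenge above, here is one way a recipient could be made idempotent and able to discard stale messages under at-least-once, unordered delivery. This is a sketch under assumptions that are not from our system: each message carries a unique `id` and a `version` that increases per work item, and the receiver state lives in memory instead of a database.

```javascript
// Hypothetical receiver that tolerates duplicates and out-of-order messages.
// Assumes each message looks like: { id, workId, version, status }.
const createReceiver = () => {
  const seenMessageIds = new Set(); // ids of messages already handled
  const workStatus = new Map(); // workId -> { version, status }

  // Returns "applied", "duplicate", or "stale".
  const handle = (message) => {
    if (seenMessageIds.has(message.id)) return "duplicate";
    seenMessageIds.add(message.id);

    const current = workStatus.get(message.workId);
    if (current && current.version >= message.version) return "stale";

    workStatus.set(message.workId, {
      version: message.version,
      status: message.status,
    });
    return "applied";
  };

  return { handle, workStatus };
};
```

In a real service, the seen-message check and the status update would need to be persisted together, ideally in one database transaction, so that a crash between the two cannot lose or double-apply a message.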